Defining Ownership in AI QA Workflows

Assign single-point ownership, auditable logs, and role-based governance to eliminate accountability gaps in AI-driven QA workflows.


Defining ownership in AI-driven QA workflows is essential to avoid confusion, ensure accountability, and maintain testing integrity. With AI automating tasks like test creation and maintenance, roles in QA have shifted. Teams now face challenges like blurred responsibilities, accountability gaps, and limited visibility into automated processes. Here's what you need to know:

- AI's Impact on QA: AI simplifies test creation but disperses responsibilities across teams, making accountability unclear.

- Consequences of Poor Ownership: Unclear roles lead to missed bugs, production defects, and costly errors.

- Challenges:

  - Overlapping roles blur accountability.

  - AI-generated outputs are hard to validate manually.

  - Lack of transparency in automated decisions creates "invisible agency."

- Solutions:

  - Assign a single accountable owner per workflow or AI agent.

  - Use evidence-based processes with auditable logs.

  - Implement role-based governance with clear responsibilities for QA, development, and operations teams.

  - Introduce checkpoints, metrics, and escalation paths to ensure oversight.

Platforms like Rock Smith address these issues with features such as semantic targeting, real-time execution logs, and persona-driven testing. By defining roles and establishing clear accountability frameworks, organizations can tackle AI-driven QA challenges effectively.



Challenges in Assigning Ownership in AI-Driven QA Workflows

The transition to AI-powered testing introduces unique challenges, making it harder to pinpoint ownership compared to traditional QA setups.

Overlapping Roles and Responsibilities

AI-driven workflows blur the lines between QA, development, and operations. Each team has distinct roles: product teams outline goals and intent, engineering builds the systems, and operations ensures performance and scalability. But when an AI-generated test fails, these boundaries often collapse. For instance, product teams might not have the technical expertise to debug failures, while operations teams can't adjust AI prompts or evaluate business requirements. This often leaves engineering teams as the default owners, tasked with resolving issues - even at inconvenient hours - regardless of whether the root cause lies in product design, infrastructure, or the AI model itself.

This scenario creates what researchers call "ambiguous ownership", where teams debate whether failures stem from the AI system, product decisions, or infrastructure problems. When original teams disband, their successors may inherit systems without clear accountability. As Owen Chamberlain aptly notes:

"AI agents don't create accountability problems, they inherit them. When autonomous systems outlast the teams that built them, ownership disappears."

Such overlapping responsibilities lead to accountability gaps, especially when AI generates outputs faster than humans can review them.

Accountability Gaps for AI-Generated Outputs

AI-driven testing platforms produce outputs - like edge cases, test personas, and semantic targets - at a pace that far exceeds manual review capabilities. This creates a "responsibility vacuum", where human reviewers technically hold authority but lack the time or expertise to validate AI-generated results effectively. As a result, verification often becomes a superficial process, more about checking boxes than ensuring quality.

The data paints a clear picture. Code subjected to five or more rounds of AI-driven optimization shows a 37.6% rise in critical vulnerabilities. Teams may assume that each iteration improves quality, but AI refinements can introduce subtle issues, such as insecure defaults or missing input validation, that automated tools fail to catch. Gartner predicts that by 2026, 40% of enterprise applications will incorporate task-specific AI agents, which will only magnify these accountability challenges. In multi-vendor setups, where workflows involve agents from different providers, tracing failures becomes even harder. Gartner also estimates that over 70% of large enterprises will require documented AI decision logs before approving autonomous systems, a sign that traditional accountability frameworks are struggling to keep up.

The sheer speed and volume of AI outputs make transparency a critical requirement for maintaining oversight.

Limited Visibility into Automated Processes

Traditional testing logs provide basic information - like which tests ran and their results. But AI-driven workflows demand deeper insights, including the reasoning behind decisions. Without records of intent and state transitions, teams are left in the dark when incidents occur.

This lack of transparency leads to what experts term "invisible agency", where semi-autonomous systems make decisions without clear ownership. Many organizations already have AI systems in production that aren't explicitly labeled as "AI", yet these systems influence critical aspects like test coverage, risk assessment, and deployment readiness. The disconnect between team structures and the decision-making authority of these systems leaves crucial testing decisions in a gray area.

Fixing this issue requires more than just better logging. Organizations need immutable audit trails that capture which agent acted, what data it accessed, which policies applied, and where human oversight was involved. Without such transparency, teams risk shifting from delegation - where authority is granted but responsibility is retained - to outright abdication, where outcomes occur without any human accountability.
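One common way to make an audit trail tamper-evident is to hash-chain its entries. Each record stores the hash of the one before it, so editing any past record breaks the chain from that point on. The sketch below is a minimal illustration that assumes a simple in-memory log. The field names (`agent_id`, `data_accessed`, `policies_applied`, `human_reviewer`) are hypothetical, chosen to mirror the four items listed above, and are not any product's schema. A real deployment would also need durable, write-once storage.

```python
import hashlib
import json
from dataclasses import dataclass, field
from typing import Optional

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


@dataclass
class AuditTrail:
    """Append-only log in which each entry stores the hash of the previous entry."""
    entries: list = field(default_factory=list)

    def append(self, agent_id: str, action: str, data_accessed: list,
               policies_applied: list, human_reviewer: Optional[str]) -> dict:
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS
        record = {
            "agent_id": agent_id,
            "action": action,
            "data_accessed": data_accessed,
            "policies_applied": policies_applied,
            "human_reviewer": human_reviewer,  # None means no human was involved
            "prev_hash": prev_hash,
        }
        # Hash a canonical JSON encoding so key order cannot change the digest.
        payload = json.dumps(record, sort_keys=True).encode("utf-8")
        record["hash"] = hashlib.sha256(payload).hexdigest()
        self.entries.append(record)
        return record

    def verify(self) -> bool:
        """Recompute every hash; an edited or reordered entry fails the check."""
        prev_hash = GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev_hash:
                return False
            payload = json.dumps(body, sort_keys=True).encode("utf-8")
            if hashlib.sha256(payload).hexdigest() != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True


trail = AuditTrail()
trail.append("test-gen-agent", "generated checkout tests", ["staging_db"], ["no-prod-data"], None)
trail.append("triage-agent", "closed flaky failure", ["ci_logs"], ["human-approval"], "j.doe")
print(trail.verify())  # True
trail.entries[0]["action"] = "edited after the fact"
print(trail.verify())  # False
```

Hash-chaining only makes tampering detectable. It does not prevent it, which is why the chain head is usually also stored somewhere the agent cannot write.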

Resolving these challenges is key to building a clear and reliable ownership framework for AI-driven QA workflows.

Framework for Defining Ownership in AI QA Workflows

Establishing clear ownership in AI QA workflows hinges on defining precise principles and scalable structures. Below are the key elements that form the backbone of an effective ownership framework.

Core Principles for Ownership Clarity

The first and most critical principle is single-point accountability. Every AI workflow or agent must have one clearly identified human owner, not a team or committee. This eliminates the confusion that can arise from shared responsibility. Majid Sheikh, CTO at Agents Arcade, emphasizes this by saying:

"Ownership is not about credit. It's about accountability."

This accountability is supported by evidence-based processes. Every automated action must generate auditable logs, including prompts, tool calls, and inputs. These logs are essential for tracing errors back to their source, whether it's the AI model, product requirements, or infrastructure. To maintain reliability, organizations should aim for a policy pass rate of at least 99% and ensure that kill switches can be activated within 2 minutes.
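The 99% policy pass rate and the 2-minute kill-switch target can both be checked mechanically. This sketch makes two assumptions. First, "policy pass rate" is read as the share of automated actions that passed every policy check. Second, kill-switch drills are recorded as activation times in seconds. The function and field names are hypothetical.

```python
from dataclasses import dataclass

POLICY_PASS_TARGET = 0.99   # at least 99% of actions pass policy checks
KILL_SWITCH_LIMIT_S = 120   # kill switch must engage within 2 minutes


@dataclass
class ReliabilityReport:
    pass_rate: float
    slowest_kill_switch_s: float
    meets_targets: bool


def check_reliability(actions_passed: int, actions_total: int,
                      kill_switch_drills_s: list[float]) -> ReliabilityReport:
    """Compare observed agent behavior against the two reliability targets."""
    if actions_total == 0 or not kill_switch_drills_s:
        raise ValueError("need at least one action and one kill-switch drill")
    pass_rate = actions_passed / actions_total
    slowest = max(kill_switch_drills_s)  # judge by the worst drill, not the average
    ok = pass_rate >= POLICY_PASS_TARGET and slowest <= KILL_SWITCH_LIMIT_S
    return ReliabilityReport(pass_rate, slowest, ok)


report = check_reliability(actions_passed=4_962, actions_total=5_000,
                           kill_switch_drills_s=[41.0, 88.5, 130.2])
print(report.meets_targets)  # False: the pass rate is fine, but one drill exceeded 2 minutes
```

Using the slowest drill rather than the mean is a deliberate choice. A kill switch that is usually fast but occasionally slow still fails when it matters.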

Another key principle is tiered autonomy, where AI agents advance through carefully defined stages: Assist → Execute → Optimize → Orchestrate. Initially, agents operate in "shadow mode", suggesting actions for humans to execute. Only after meeting stringent quality standards do they progress to executing tasks autonomously within strict boundaries. This step-by-step approach ensures a controlled and gradual increase in responsibility.
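The Assist → Execute → Optimize → Orchestrate ladder can be modeled as a small state machine. An agent moves up exactly one stage at a time, and only when it clears a quality gate. The gate used here is an illustrative assumption, not a threshold from the source: a minimum number of runs at the current stage plus a minimum rate of agreement with human decisions.

```python
from enum import IntEnum


class Autonomy(IntEnum):
    ASSIST = 1       # shadow mode: the agent suggests, a human executes
    EXECUTE = 2      # the agent acts autonomously within strict boundaries
    OPTIMIZE = 3
    ORCHESTRATE = 4


# Hypothetical gate: evidence required before leaving any stage.
MIN_RUNS = 200
MIN_AGREEMENT = 0.98


def next_stage(current: Autonomy, runs_at_stage: int, agreement_rate: float) -> Autonomy:
    """Promote by exactly one stage if the evidence clears the gate; otherwise stay."""
    if current == Autonomy.ORCHESTRATE:
        return current  # already at the top of the ladder
    if runs_at_stage >= MIN_RUNS and agreement_rate >= MIN_AGREEMENT:
        return Autonomy(current + 1)
    return current


stage = Autonomy.ASSIST
stage = next_stage(stage, runs_at_stage=250, agreement_rate=0.99)
print(stage.name)  # EXECUTE
stage = next_stage(stage, runs_at_stage=40, agreement_rate=0.995)
print(stage.name)  # EXECUTE (not enough runs at this stage yet)
```

Because promotion is one step at a time, an agent cannot jump from shadow mode straight to orchestration, however good a single batch of results looks.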

Role-Based Governance Model

An effective governance model builds on these principles by dividing responsibilities according to expertise. Here's how roles typically break down:

- Product teams define intent and risk tolerance.

- Engineering teams handle orchestration and prompt logic.

- Operations teams focus on uptime and scalability.

This clear division is crucial. As Majid Sheikh points out:

"If it can wake someone up at night, it's not a feature anymore."

For instance, product teams may establish guardrails and success metrics, but they shouldn’t be responsible for handling late-night incidents. Engineering teams, on the other hand, must own production behavior, as they set the system's boundaries and manage its internal logic. Meanwhile, QA engineers take on a more strategic role. Instead of manual testing, they become "curators of baselines" and "authors of guardrails", defining what constitutes acceptable behavior and ensuring AI agents operate within those limits.

One B2B SaaS team successfully applied this model by integrating AI agents into 180 critical workflows. This approach reduced escaped UI bugs by 62%. They also streamlined triage processes by routing specific AI-generated differences to the appropriate domain experts, cutting triage time from 20 minutes to just 6 minutes.
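Routing AI-generated differences to the right domain expert can be as simple as a lookup from the affected area of the product to a named owner. The sketch below is hypothetical. The route prefixes, owner addresses, and fallback are invented for the example, and the case study above does not describe its actual routing mechanism.

```python
# Hypothetical mapping from UI route prefix to the accountable domain expert.
ROUTING_TABLE = {
    "/checkout": "payments-owner@example.com",
    "/checkout/tax": "tax-owner@example.com",
    "/account": "identity-owner@example.com",
}
FALLBACK_OWNER = "qa-lead@example.com"  # a named person, never "the team"


def route_diff(page_path: str) -> str:
    """Return the owner for the longest route prefix that matches the changed page."""
    matches = [prefix for prefix in ROUTING_TABLE
               if page_path == prefix or page_path.startswith(prefix + "/")]
    if not matches:
        return FALLBACK_OWNER
    # The most specific prefix wins, so /checkout/tax beats /checkout.
    return ROUTING_TABLE[max(matches, key=len)]


print(route_diff("/checkout/tax/summary"))  # tax-owner@example.com
print(route_diff("/checkout/cart"))         # payments-owner@example.com
print(route_diff("/blog/post-1"))           # qa-lead@example.com
```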

To support this governance model, clear escalation paths are essential. Each AI agent should have a one-page policy registry that outlines its purpose, allowed tools, data access restrictions, and the appropriate contact for emergencies. Regular 15-minute "agent health" reviews allow teams to address incidents, monitor costs, and evaluate a sample of automated actions before minor issues escalate into major problems.
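The one-page policy registry translates naturally into a machine-readable record that tooling can enforce before each tool call. This is a minimal sketch. It assumes a hypothetical `AgentPolicy` record and an in-process `authorize` check, and the agent, owner, and contact values are made up. Real deployments would more likely load the registry from version-controlled configuration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentPolicy:
    agent_id: str
    purpose: str
    owner: str                   # exactly one accountable human
    emergency_contact: str
    allowed_tools: frozenset
    allowed_data: frozenset


class PolicyViolation(Exception):
    pass


def authorize(policy: AgentPolicy, tool: str, dataset: str) -> None:
    """Raise before the call if the agent steps outside its registered scope."""
    if tool not in policy.allowed_tools:
        raise PolicyViolation(
            f"{policy.agent_id} may not use {tool!r}; escalate to {policy.emergency_contact}")
    if dataset not in policy.allowed_data:
        raise PolicyViolation(
            f"{policy.agent_id} may not read {dataset!r}; escalate to {policy.emergency_contact}")


visual_diff_agent = AgentPolicy(
    agent_id="visual-diff-agent",
    purpose="Flag unexpected UI changes on critical workflows",
    owner="jane.doe",
    emergency_contact="oncall-qa@example.com",
    allowed_tools=frozenset({"screenshot", "compare_baseline"}),
    allowed_data=frozenset({"staging_ui"}),
)

authorize(visual_diff_agent, "screenshot", "staging_ui")  # within scope: returns silently
try:
    authorize(visual_diff_agent, "deploy", "staging_ui")
except PolicyViolation as err:
    print(err)  # visual-diff-agent may not use 'deploy'; escalate to oncall-qa@example.com
```

Each violation message names the emergency contact, so whoever sees the failure also knows whom to call.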

How Rock Smith Enables Ownership in AI QA Workflows

Traditional vs AI-Enhanced QA Ownership Models Comparison

Rock Smith is designed to build ownership into every layer of testing. Its natural-language test flows let a broader range of team members create tests, which addresses the accountability gaps often found in traditional QA processes.

Key Capabilities for Ownership Clarity

Rock Smith introduces three standout features that bring transparency and accountability to QA workflows:

- Semantic Targeting: Instead of relying on brittle selectors, Rock Smith uses visual descriptions like "blue Submit button" to adapt tests automatically as UI elements change. This shifts QA teams' focus from tedious maintenance tasks to higher-level strategic oversight.

- Real-Time Execution with AI Reasoning: The platform provides step-by-step screenshots and detailed logs of AI decision-making during test execution. This real-time visibility ensures QA teams can track and understand every action taken.

- Role-Based Access and Persona Testing: With role-based access control (RBAC), teams can assign permissions and conduct persona-driven testing to simulate distinct user journeys. Additionally, automated fuzzing handles 14 edge case scenarios, including boundary values, SQL injection, and XSS vulnerabilities.
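Here is a generic sketch of what boundary-value, SQL-injection, and XSS inputs look like for a text field. It is not Rock Smith's generator and does not reproduce its 14 scenarios. The function names and probe strings are illustrative assumptions.

```python
def boundary_values(min_len: int, max_len: int) -> list[str]:
    """Strings at, and one character beyond, a field's length limits."""
    lengths = [min_len - 1, min_len, max_len, max_len + 1]
    return ["a" * n for n in lengths if n >= 0]


# Classic probe strings; a safe form should reject or escape every one of them.
INJECTION_PROBES = {
    "sql_injection": ["' OR '1'='1", "'; DROP TABLE users; --"],
    "xss": ["<script>alert(1)</script>", "\"><img src=x onerror=alert(1)>"],
}


def edge_case_inputs(min_len: int, max_len: int) -> dict[str, list[str]]:
    """Group boundary and injection inputs by category for one text field."""
    cases = {"boundary": boundary_values(min_len, max_len)}
    cases.update(INJECTION_PROBES)
    return cases


cases = edge_case_inputs(min_len=1, max_len=8)
print([len(s) for s in cases["boundary"]])  # [0, 1, 8, 9]
```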

Streamlining Workflows with AI Agents

Rock Smith's AI agents take over repetitive tasks, allowing QA teams to focus on strategic challenges. Its unique zero-transmission architecture ensures data security by executing tests locally on a desktop app while receiving instructions from the cloud. This setup gives teams full control over their testing environments and directly tackles the visibility and accountability issues common in traditional models.

The system also integrates human-in-the-loop workflows, combining the efficiency of AI with the judgment of human oversight. This ensures that a designated individual is responsible for final verification, maintaining accountability at every step.

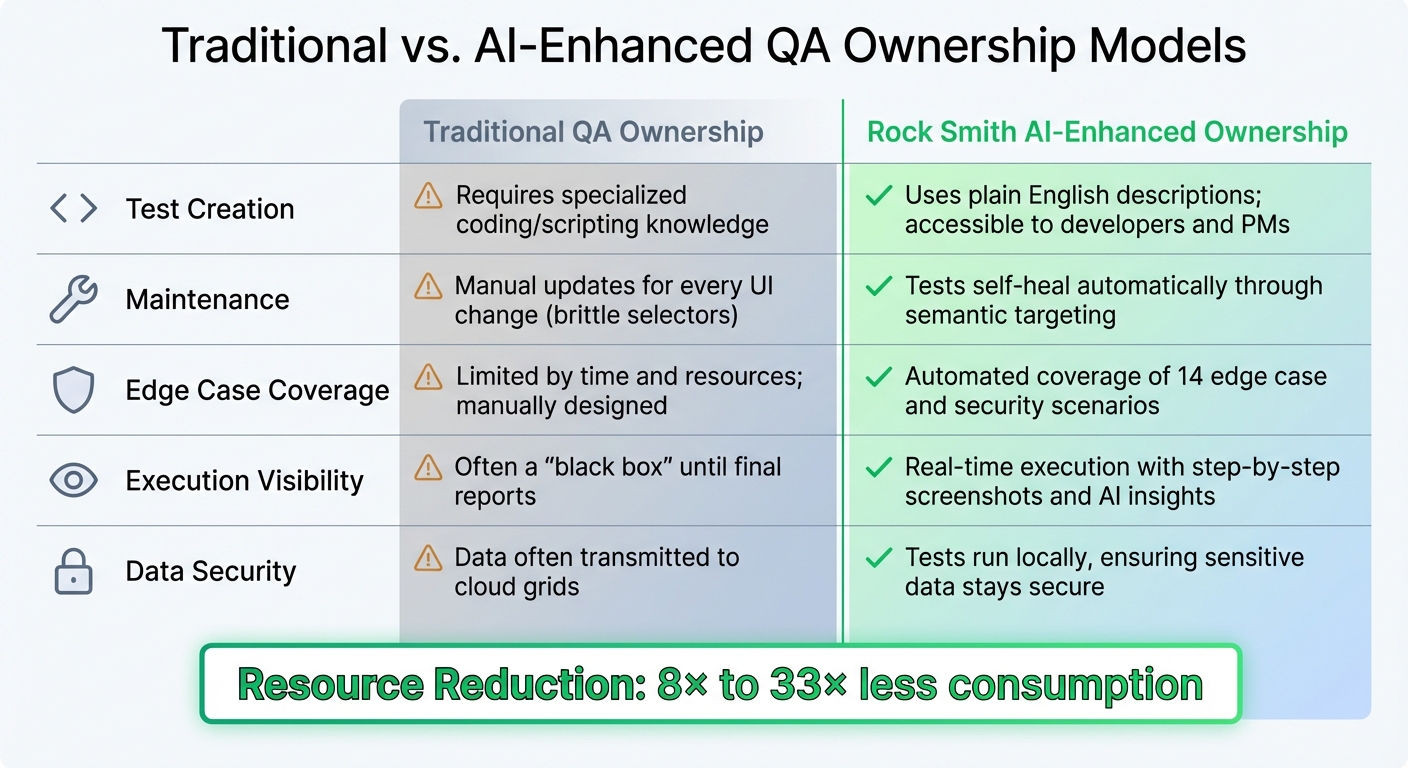

Comparison: Traditional vs. AI-Enhanced Ownership Models

Rock Smith redefines QA ownership by addressing the limitations of traditional methods. The table below highlights the key differences:

| Feature | Traditional QA Ownership | Rock Smith AI-Enhanced Ownership |
|---|---|---|
| Test Creation | Requires specialized coding/scripting knowledge | Uses plain English descriptions; accessible to developers and PMs |
| Maintenance | Manual updates for every UI change (brittle selectors) | Tests self-heal automatically through semantic targeting |
| Edge Case Coverage | Limited by time and resources; manually designed | Automated coverage of 14 edge case and security scenarios |
| Execution Visibility | Often a "black box" until final reports | Real-time execution with step-by-step screenshots and AI insights |
| Data Security | Data often transmitted to cloud grids | Tests run locally, ensuring sensitive data stays secure |

This transformation replaces the traditional horizontal layering - where frontend, backend, and testing operate in silos - with vertical integration. This structure empowers individuals to own features from requirements all the way to deployment. Studies suggest this shift can reduce resource consumption by 8× to 33×.

Steps to Implement Clear Ownership in AI QA Workflows

Mapping Roles to Rock Smith's Features

To address the common issue of overlapping responsibilities, it’s essential to clearly assign roles that align with specific platform features. Rock Smith's platform is structured around three main roles: QA Teams, Development Teams, and Business Leaders.

QA Engineers should take charge of Test Personas and Edge Case Generation. Assign specific team members to manage test personas, ensuring they consistently simulate diverse user behaviors. Additionally, designate a security-focused QA role to monitor the Edge Case Generation feature, which automatically probes boundary values and vulnerabilities such as XSS and SQL injection across 14 test scenarios.

Developers can oversee test implementation by using natural language to describe requirements, eliminating the need for advanced test automation skills. They should also manage Local Browser Execution to ensure sensitive data remains secure and local.

QA Leads and Managers should focus on the Quality Metrics dashboard. This tool helps track pass/fail trends and execution history, providing a centralized view of process efficiency and accountability. For automation specialists, the Semantic Targeting feature is key - it ensures tests adapt automatically to UI changes, reducing the need for manual updates to brittle selectors.
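One lightweight way to keep this role mapping honest is to store it as data and check that every feature has exactly one accountable role. The sketch uses the feature and role names from this section. The RACI-style split into "accountable" and "consulted" roles is an added assumption, not something the platform provides.

```python
# Feature -> {"accountable": [one role], "consulted": [supporting roles]}
OWNERSHIP_MAP = {
    "Test Personas": {"accountable": ["QA Engineer"], "consulted": ["Developer"]},
    "Edge Case Generation": {"accountable": ["Security QA"], "consulted": ["Developer"]},
    "Local Browser Execution": {"accountable": ["Developer"], "consulted": []},
    "Quality Metrics dashboard": {"accountable": ["QA Lead"], "consulted": []},
    "Semantic Targeting": {"accountable": ["Automation Specialist"], "consulted": ["QA Engineer"]},
}


def ownership_gaps(mapping: dict) -> dict[str, str]:
    """Return the features whose accountable role is missing or shared."""
    problems = {}
    for feature, roles in mapping.items():
        accountable = roles.get("accountable", [])
        if len(accountable) == 0:
            problems[feature] = "no accountable owner"
        elif len(accountable) > 1:
            problems[feature] = "shared ownership: " + ", ".join(accountable)
    return problems


print(ownership_gaps(OWNERSHIP_MAP))  # {}
```

Running a check like this in CI flags a shared or missing owner when someone edits the map, before it turns into an incident.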

"Establishment of ownership with a clear responsibility assignment framework prevents rollout failure and creates accountability across security, legal, and engineering teams." - Miroslav Milovanovic, Knostic

Additionally, implement a process where human reviewers evaluate AI-generated suggestions before deployment. With these responsibilities mapped out, the next step is to establish governance metrics and checkpoints to ensure accountability.

Setting Checkpoints and Metrics

Once roles are defined, governance gates should be introduced to ensure every AI-driven decision meets strict criteria. These include acceptance standards, explainability requirements, and coverage thresholds before moving forward. Use the platform’s real-time execution logs as checkpoints for manually verifying complex test flows.
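A governance gate can be expressed as a function that blocks a change unless every criterion passes and reports which criteria failed. The field names and the coverage threshold below are hypothetical placeholders for whatever acceptance, explainability, and coverage standards a team actually sets.

```python
from dataclasses import dataclass


@dataclass
class AIChange:
    acceptance_tests_passed: bool
    explanation: str   # the agent's recorded reasoning for the change
    coverage: float    # fraction of critical flows exercised, 0.0 to 1.0


MIN_COVERAGE = 0.85  # hypothetical threshold


def gate(change: AIChange) -> list[str]:
    """Return the failed criteria; an empty list means the change may ship."""
    failures = []
    if not change.acceptance_tests_passed:
        failures.append("acceptance")
    if not change.explanation.strip():
        failures.append("explainability")  # no explanation, no ship
    if change.coverage < MIN_COVERAGE:
        failures.append("coverage")
    return failures


print(gate(AIChange(True, "Selector updated after button rename", 0.91)))  # []
print(gate(AIChange(True, "", 0.70)))  # ['explainability', 'coverage']
```

Returning every failed criterion, rather than stopping at the first one, gives the owner a complete picture in a single review.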

"If the AI can't explain its decision, it doesn't ship." - Applaudo

Create benchmarks using validated test scenarios to regularly assess the accuracy and reliability of AI-generated QA outputs. Focus on core metrics like Defect Detection Percentage (DDP) and Mean Time to Detect (MTTD) to measure team efficiency and accountability.
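Both metrics are simple ratios. Under their common definitions, DDP is the share of all known defects that testing caught before release. MTTD is the average delay between a defect's introduction and its detection. The sketch assumes each defect record carries introduction and detection timestamps plus a flag for whether it escaped to production. Those field names are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Defect:
    introduced: datetime
    detected: datetime
    found_in_production: bool


def defect_detection_percentage(defects: list[Defect]) -> float:
    """DDP = defects caught before release / all defects found, as a percentage."""
    if not defects:
        raise ValueError("no defects recorded")
    caught = sum(1 for d in defects if not d.found_in_production)
    return 100.0 * caught / len(defects)


def mean_time_to_detect(defects: list[Defect]) -> timedelta:
    """Average delay between a defect being introduced and being detected."""
    if not defects:
        raise ValueError("no defects recorded")
    total = sum((d.detected - d.introduced for d in defects), timedelta())
    return total / len(defects)


t0 = datetime(2025, 1, 6, 9, 0)
defects = [
    Defect(t0, t0 + timedelta(hours=2), found_in_production=False),
    Defect(t0, t0 + timedelta(hours=6), found_in_production=False),
    Defect(t0, t0 + timedelta(hours=4), found_in_production=False),
    Defect(t0, t0 + timedelta(hours=36), found_in_production=True),
]
print(defect_detection_percentage(defects))  # 75.0
print(mean_time_to_detect(defects))          # 12:00:00
```

In this example the single escaped defect accounts for most of the 12-hour MTTD. This is why the two metrics are usually read together.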

Schedule regular reviews - monthly or quarterly - to compare current performance against these benchmarks and evaluate improvements in accountability. Track the ratio of reported bugs to canceled bugs to gauge how well the QA team understands the business context. Organizations that implemented structured AI governance reported adoption rates increasing from 15% to 78% within just four months, underscoring the value of these checkpoints.
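A periodic review can compare each metric with its benchmark and compute the reported-to-canceled bug ratio mentioned above. This sketch treats a "canceled" bug as one closed as invalid or as working as intended. That reading, the metric names, and the benchmark values are all assumptions for illustration.

```python
def review(current: dict[str, float], benchmark: dict[str, float],
           higher_is_better: dict[str, bool]) -> dict[str, str]:
    """Label each benchmarked metric as improved, regressed, or unchanged."""
    verdicts = {}
    for name, target in benchmark.items():
        value = current[name]
        if value == target:
            verdicts[name] = "unchanged"
        elif (value > target) == higher_is_better[name]:
            verdicts[name] = "improved"
        else:
            verdicts[name] = "regressed"
    return verdicts


def reported_to_canceled(reported: int, canceled: int) -> float:
    """Higher values mean fewer bug reports were rejected as invalid."""
    return float("inf") if canceled == 0 else reported / canceled


current = {"ddp": 82.0, "mttd_hours": 14.0,
           "reported_to_canceled": reported_to_canceled(120, 15)}
benchmark = {"ddp": 75.0, "mttd_hours": 12.0, "reported_to_canceled": 6.0}
direction = {"ddp": True, "mttd_hours": False, "reported_to_canceled": True}
print(review(current, benchmark, direction))
# {'ddp': 'improved', 'mttd_hours': 'regressed', 'reported_to_canceled': 'improved'}
```

Recording the direction of each metric explicitly avoids a common review mistake: reading a rising MTTD as progress.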

"Metrics act more like a compass - a tool to measure the quality level, identify gaps, and detect hidden problems, while also increasing transparency." - Amr Salem, Senior QA Lead

Tailor metrics to the audience: developers need detailed defect data, while project managers need high-level summaries of test completion. Fixing a defect after release can cost up to 10× as much as catching it before production, which makes these checkpoints crucial for both accountability and cost management.

Conclusion: Driving Accountability and Efficiency in AI QA Workflows

Clear ownership in AI workflows goes beyond merely assigning tasks - it eliminates the confusion that can arise when AI systems fail in production. When an AI-driven test produces a false positive or misses a critical defect, someone must have the authority to act immediately, without waiting for a committee decision. As Majid Sheikh, CTO of Agents Arcade, explains:

"If an AI agent can affect customers, money, or trust, it must have a single accountable owner in production. Not a committee. Not a shared spreadsheet."

Defining roles clearly enhances efficiency. Product teams focus on setting goals, while Engineering takes charge of implementation and incident response. This separation of duties avoids overlap and ensures smoother workflows. QA teams, in turn, can shift from being stuck in repetitive maintenance tasks to becoming a strategic part of quality decision-making. This role clarity creates the foundation for automation platforms like Rock Smith to deliver accurate and measurable results.

Rock Smith tackles visibility issues by offering real-time execution logs, step-by-step screenshots, and AI reasoning for every action, which allows teams to trace the root cause of failures. Its dashboard, metrics tracking, and local execution tools enable owners to quickly address key questions: What went wrong? Why did it happen? How can it be avoided in the future? By embedding these features, Rock Smith ensures that every incident not only gets resolved but also contributes to better prevention strategies. This level of transparency turns routine testing into a proactive approach to quality assurance.

"Ownership is not about credit. It's about accountability. When an AI agent does something harmful, the owner must be able to answer three questions immediately. What happened, why did it happen, and how do we prevent it from happening again?" - Majid Sheikh, CTO, Agents Arcade

The solution lies in treating AI agents as dynamic services, complete with documented failure modes and built-in kill switches, rather than static tools. Assigning roles - such as QA for test personas and Developers for local execution - ensures accountability aligns seamlessly with operational efficiency.

FAQs

Who owns an AI QA workflow when something breaks in production?

When an AI QA workflow breaks down in production, one designated person should hold responsibility. This individual oversees the system, handles issues as they arise, decides when processes need to be paused, and manages ongoing changes to prompts, models, tools, and policies. A single, clear point of ownership keeps these constantly changing workflows accountable.

What audit logs are needed to show an AI agent's actions and decisions?

Audit logs need to document an AI agent's activities in detail. This includes executed commands, accessed data, tool usage, and outputs. To ensure transparency and accountability, logs should also include timestamps, user prompts, and reasoning chains. These elements are crucial for meeting compliance standards, simplifying debugging, and conducting thorough security reviews. By maintaining this level of detail, you create a reliable record of the AI agent's actions and the rationale behind them.
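A single audit entry covering the elements listed above might be validated like this before it is written. The field names are hypothetical. The point of the sketch is that a record missing any required element is rejected rather than silently stored as an incomplete JSON line.

```python
import json
from datetime import datetime, timezone

# Hypothetical required fields, one per element listed above.
REQUIRED_FIELDS = {
    "timestamp", "agent_id", "user_prompt", "reasoning",
    "commands", "data_accessed", "tools_used", "output",
}


def to_audit_line(record: dict) -> str:
    """Serialize one audit record as a JSON line, refusing incomplete records."""
    missing = REQUIRED_FIELDS - set(record)
    if missing:
        raise ValueError(f"audit record missing: {sorted(missing)}")
    return json.dumps(record, sort_keys=True)


entry = {
    "timestamp": datetime.now(timezone.utc).isoformat(),
    "agent_id": "test-gen-agent",
    "user_prompt": "Cover the password-reset flow",
    "reasoning": "Reset link is a critical path; added expiry and reuse cases",
    "commands": ["open /reset", "submit email"],
    "data_accessed": ["staging_users"],
    "tools_used": ["browser"],
    "output": "3 tests generated",
}
print(to_audit_line(entry))
```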

How do we decide when an AI agent can run tests autonomously?

Deciding when an AI agent should run tests on its own hinges on how much human oversight is built into the process. There are three common approaches to this:

- Human-in-the-loop: Humans are directly involved in every decision or action the AI takes, ensuring close monitoring and control.

- Human-on-the-loop: Humans oversee the AI's actions and can intervene if necessary but aren't involved in every step.

- Human-in-command: Humans set the overall direction and policies, while the AI operates independently within those boundaries.

Each model helps ensure accuracy, reduce errors, and maintain trust in the system. Defining roles clearly is essential to strike the right balance between automation and oversight.
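The three oversight models differ mainly in when a human must act relative to the agent. The sketch below encodes that difference as a decision function. The mode names follow the list above. The `within_policy` flag and the returned action labels are hypothetical simplifications.

```python
from enum import Enum


class Oversight(Enum):
    IN_THE_LOOP = "human-in-the-loop"
    ON_THE_LOOP = "human-on-the-loop"
    IN_COMMAND = "human-in-command"


def handle_action(mode: Oversight, within_policy: bool) -> str:
    """Decide what happens before an agent-proposed test action runs."""
    if not within_policy:
        return "escalate"          # outside the human-set boundaries: route to the owner
    if mode is Oversight.IN_THE_LOOP:
        return "await_approval"    # a human approves every action first
    if mode is Oversight.ON_THE_LOOP:
        return "run_and_notify"    # runs, while a human monitors and can intervene
    return "run"                   # in command: humans set policy, the agent runs within it


for mode in Oversight:
    print(mode.value, "->", handle_action(mode, within_policy=True))
```

In all three modes, an action outside policy goes back to a person. What changes between the modes is only how much in-policy work runs without a human touching it.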