How to Build Evaluation Workflows for AI Agents

Learn how to create efficient evaluation workflows for AI agents using Google Sheets to improve lead scoring and performance.

Accuracy matters when AI agents automate complex tasks, because a flawed output wastes time downstream. Evaluation workflows help by surfacing discrepancies between what an agent produced and what you expected, so you can fix prompts and processes before they affect production. This article explains how to design and implement evaluation workflows for AI agents so that engineering and product teams can refine performance and build reliable systems.

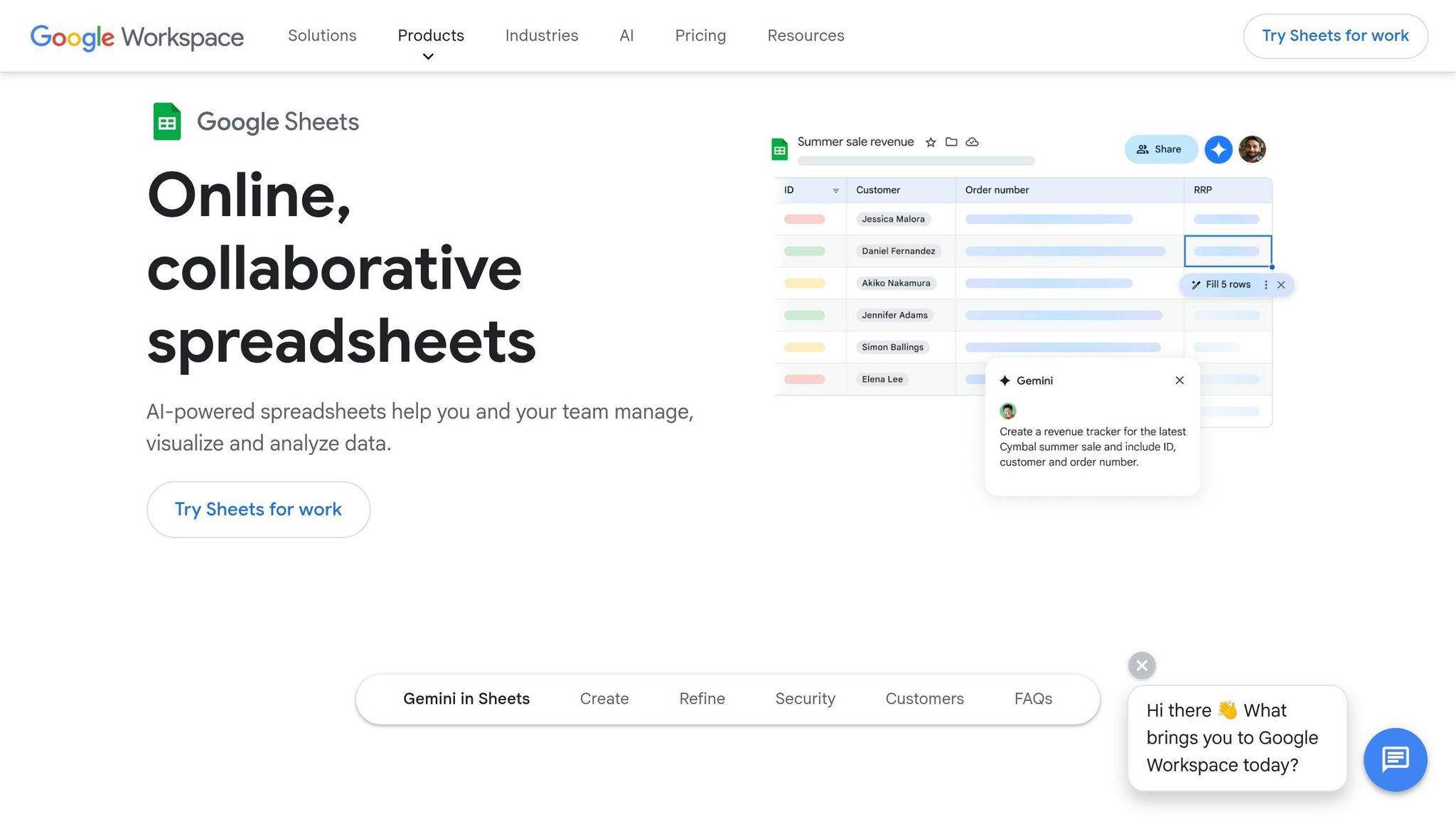

By following a systematic approach with tools like Google Sheets and an AI model, you can structure your evaluation process like a real test suite, with consistent and measurable results. Let’s break down the process step by step.

Understanding the Role of Evaluation in AI Workflows

The underlying challenge in working with AI agents - whether for lead scoring, decision-making, or other purposes - is that incorrect outputs can lead to inefficiencies and wasted resources. For example, a lead scoring model providing skewed results might prioritize the wrong leads, misallocate efforts, and negatively impact downstream operations.

An effective evaluation workflow acts as a safeguard, enabling you to systematically test your AI models against expected outcomes. This creates a feedback loop where discrepancies can be identified, analyzed, and corrected, leading to gradual performance improvements.

Step-by-Step Guide to Building Your Evaluation Workflow

1. Prepare Your Data in Google Sheets

Start by organizing your data in a structured format within Google Sheets. Create columns for key evaluation markers such as:

- Expected Score: The target output based on predefined criteria.

- Actual Score: The score generated by your AI agent.

- Expected and Actual Categories: Classifications to compare anticipated versus delivered results.

With this setup, each row serves as a test case: it records what the agent should produce next to what it actually produced.
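To make the row-as-test-case idea concrete, here is a minimal Python sketch that reads a sheet exported as CSV into typed records. The column names (`Name`, `Expected Score`, `Expected Category`, `Actual Score`, `Actual Category`), the `EvalRow` class, and the sample rows are all assumptions chosen for illustration; adapt them to your own sheet.

```python
import csv
import io
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class EvalRow:
    """One test case: a lead plus its expected and (optional) actual results."""
    name: str
    expected_score: int
    expected_category: str
    actual_score: Optional[int] = None
    actual_category: Optional[str] = None


def load_rows(csv_text: str) -> List[EvalRow]:
    """Parse CSV text (for example, a sheet exported as CSV) into test cases."""
    rows = []
    for record in csv.DictReader(io.StringIO(csv_text)):
        # Actual columns stay empty until the agent has scored the row.
        actual_score = (record.get("Actual Score") or "").strip()
        actual_category = (record.get("Actual Category") or "").strip()
        rows.append(
            EvalRow(
                name=record["Name"].strip(),
                expected_score=int(record["Expected Score"]),
                expected_category=record["Expected Category"].strip(),
                actual_score=int(actual_score) if actual_score else None,
                actual_category=actual_category or None,
            )
        )
    return rows


# Hypothetical sample data: one scored row and one row still waiting for the agent.
SAMPLE_CSV = """Name,Expected Score,Expected Category,Actual Score,Actual Category
Ada Example,8,hot,8,hot
Bo Example,4,cold,,
"""

rows = load_rows(SAMPLE_CSV)
print(rows[1])
```

Keeping the actual columns optional lets the same sheet hold both pending and completed test cases.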

2. Set Up Workflow Triggers

Use n8n or a similar workflow automation platform to establish triggers. For Google Sheets, you can configure a trigger that starts the workflow whenever a new row is added to a specified sheet.

3. Create Separate Evaluation and Production Paths

To ensure clarity and scalability, divide your workflow into two distinct processes:

- Evaluation Path: Simulates test conditions, allowing you to evaluate the AI agent’s performance without impacting production data.

- Production Path: Processes real data for actionable results, such as sending emails or triggering downstream actions.

This separation ensures that testing can occur independently, reducing the risk of errors in live environments.
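The separation can be sketched in plain Python as a single branch that decides whether side effects are allowed. This is not how any particular automation platform implements it; `run_lead`, the `is_evaluation` flag, the `outbox` list, and `fake_scorer` are hypothetical names standing in for your own workflow nodes.

```python
from typing import Callable, Dict, List


def run_lead(
    lead: Dict[str, str],
    score_fn: Callable[[Dict[str, str]], Dict[str, object]],
    is_evaluation: bool,
    outbox: List[str],
) -> Dict[str, object]:
    """Score a lead, then branch: evaluation records only, production acts."""
    result = dict(score_fn(lead))
    if is_evaluation:
        # Evaluation path: return the result for comparison, no side effects.
        result["path"] = "evaluation"
        return result
    # Production path: downstream actions (emails, messages) are allowed here.
    outbox.append(f"notify sales about {lead['Name']}")
    result["path"] = "production"
    return result


def fake_scorer(lead: Dict[str, str]) -> Dict[str, object]:
    """Stand-in for the AI agent so the sketch runs without a model."""
    return {"score": 8, "category": "hot"}


outbox: List[str] = []
eval_result = run_lead({"Name": "Ada Example"}, fake_scorer, True, outbox)
prod_result = run_lead({"Name": "Bo Example"}, fake_scorer, False, outbox)
print(eval_result["path"], prod_result["path"], outbox)
```

Because both paths call the same scoring function, whatever you measure on the evaluation path reflects what production will do.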

4. Integrate AI Agents for Scoring

Add an AI agent node to your workflow. Use a structured prompt to define how the agent should evaluate inputs. Include details such as:

- Name

- Company

- Job Title

- Lead Source

- Budget

- Notes

You can also implement output parsers to enforce a specific format, ensuring uniformity in evaluation results.
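As an illustration of a structured prompt paired with an output parser, the sketch below builds a prompt from the lead fields listed above and validates a JSON reply. The 1 to 10 score range, the `hot`/`warm`/`cold` categories, the JSON shape, and the function names are assumptions for this example, not something the workflow prescribes.

```python
import json
from typing import Dict

LEAD_FIELDS = ["Name", "Company", "Job Title", "Lead Source", "Budget", "Notes"]
ALLOWED_CATEGORIES = {"hot", "warm", "cold"}  # assumed labels


def build_prompt(lead: Dict[str, str]) -> str:
    """Render the lead fields into a fixed, predictable prompt layout."""
    details = "\n".join(f"{field}: {lead.get(field, 'N/A')}" for field in LEAD_FIELDS)
    return (
        "Score this lead from 1 to 10 and assign a category.\n"
        'Respond with JSON only: {"score": <int>, "category": "hot|warm|cold"}\n\n'
        + details
    )


def parse_output(raw: str) -> Dict[str, object]:
    """Reject any reply that does not match the expected format."""
    data = json.loads(raw)  # raises ValueError on malformed JSON
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    score = data.get("score")
    category = data.get("category")
    if isinstance(score, bool) or not isinstance(score, int) or not 1 <= score <= 10:
        raise ValueError(f"invalid score: {score!r}")
    if category not in ALLOWED_CATEGORIES:
        raise ValueError(f"invalid category: {category!r}")
    return {"score": score, "category": category}


prompt = build_prompt({"Name": "Ada Example", "Job Title": "CTO", "Budget": "50000"})
parsed = parse_output('{"score": 8, "category": "hot"}')
print(parsed)
```

Failing loudly on a malformed reply keeps bad rows out of the comparison step instead of letting them skew the results.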

5. Check for Discrepancies

Once the AI agent processes the data, compare the actual scores and categories against the expected ones. For instance, if a lead is expected to score 4 but receives an 8, this discrepancy signals a potential issue with the scoring algorithm or input data.
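A comparison like this can be expressed in a few lines. In the sketch below, `find_discrepancy` and its `tolerance` parameter are hypothetical; whether a one-point difference counts as a pass is a choice you would make for your own scoring scale.

```python
from typing import Dict


def find_discrepancy(
    expected_score: int,
    actual_score: int,
    expected_category: str,
    actual_category: str,
    tolerance: int = 1,
) -> Dict[str, object]:
    """Compare one row's actual results against its expected results."""
    score_gap = actual_score - expected_score
    return {
        "score_gap": score_gap,
        # Scores within the tolerance are treated as matching.
        "score_ok": abs(score_gap) <= tolerance,
        # Categories are compared case-insensitively, ignoring stray spaces.
        "category_ok": expected_category.strip().lower()
        == actual_category.strip().lower(),
    }


# The example from the text: expected 4, received 8.
report = find_discrepancy(4, 8, "cold", "hot")
print(report)
```

Recording the signed gap, not just pass or fail, shows whether the agent tends to overscore or underscore.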

6. Adjust and Iterate

Based on the discrepancies identified in the evaluation workflow, refine your AI agent’s prompts or parameters. Testing and fine-tuning are iterative processes, and continuous improvement helps align outputs with expectations over time.

7. Finalize the Production Workflow

After ensuring the evaluation workflow functions as intended, configure the production path to update Google Sheets with real-time outputs or trigger further actions, such as sending summary emails or integrating with communication tools like Slack.

Best Practices for AI Evaluation Workflows

1. Automate Data Validation

Incorporate automated checks to ensure that input data matches predefined formats or criteria. This minimizes errors entering the workflow.
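One possible shape for such a check is a function that returns a list of problems for each incoming lead. The required fields and the budget format below (a non-negative number, optionally written with `$` and commas) are assumptions for illustration; your own criteria may differ.

```python
from typing import Dict, List

REQUIRED_FIELDS = ("Name", "Company", "Job Title", "Lead Source", "Budget")


def validate_lead(lead: Dict[str, object]) -> List[str]:
    """Return a list of validation errors; an empty list means the lead is valid."""
    errors = []
    for field in REQUIRED_FIELDS:
        value = lead.get(field)
        if value is None or not str(value).strip():
            errors.append(f"missing {field}")

    raw_budget = lead.get("Budget")
    budget = "" if raw_budget is None else str(raw_budget)
    budget = budget.replace("$", "").replace(",", "").strip()
    if budget:
        try:
            if float(budget) < 0:
                errors.append("Budget must be non-negative")
        except ValueError:
            errors.append("Budget is not a number")
    return errors


good = validate_lead({
    "Name": "Ada Example", "Company": "Example Co", "Job Title": "CTO",
    "Lead Source": "Webinar", "Budget": "$50,000",
})
bad = validate_lead({"Name": "Bo Example", "Company": "", "Budget": "lots"})
print(good, bad)
```

Returning every error at once, instead of stopping at the first, makes it easier to fix a bad row in a single pass.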

2. Use Structured Prompts for Consistency

Defining clear and structured prompts for your AI agent ensures that outputs are consistent and aligned with your evaluation framework.

3. Leverage Scalable Models

Choose AI models capable of handling your workload’s complexity (e.g., OpenAI’s GPT-4.1 for larger datasets). If a model struggles with structured output, consider upgrading to a more capable version.

4. Monitor Workflow Outputs Continuously

Regularly review evaluation results to identify trends, patterns, and recurring issues. This allows you to proactively address bottlenecks.
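To spot trends across runs, it helps to reduce each evaluation run to a few numbers. The sketch below computes category accuracy and mean absolute score error; these two metrics and the tuple layout are assumptions chosen for illustration, not metrics the workflow mandates.

```python
from typing import Dict, List, Optional, Tuple

# (expected_score, actual_score, expected_category, actual_category)
Result = Tuple[int, int, str, str]


def summarize(results: List[Result]) -> Dict[str, Optional[float]]:
    """Reduce one evaluation run to a handful of comparable numbers."""
    count = len(results)
    if count == 0:
        return {"count": 0, "category_accuracy": None, "mean_abs_score_error": None}
    category_hits = sum(1 for _, _, exp_cat, act_cat in results if exp_cat == act_cat)
    total_error = sum(abs(act - exp) for exp, act, _, _ in results)
    return {
        "count": count,
        "category_accuracy": category_hits / count,
        "mean_abs_score_error": total_error / count,
    }


# Hypothetical run: one mismatch (4 vs 8) and one exact match.
summary = summarize([(4, 8, "cold", "hot"), (7, 7, "warm", "warm")])
print(summary)
```

Logging the same summary after every prompt change gives you a simple before-and-after comparison.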

5. Collaborate Across Teams

Ensure open communication between engineering, QA, and product teams to refine workflows collaboratively. Shared insights can drive faster improvements.

Key Takeaways

- Data Preparation is Key: Organize your data in Google Sheets with clear columns for expected and actual values.

- Separate Testing from Production: Build distinct paths to ensure evaluation workflows don’t interfere with live data.

- Leverage Structured Prompts: Use precise prompts and output parsers to guide your AI agent’s behavior.

- Iterate for Continuous Improvement: Identify discrepancies and refine workflows repeatedly for better accuracy.

- Automate Where Possible: Use tools like n8n to trigger workflows and reduce manual effort.

- Choose the Right AI Model: More capable models such as GPT-4.1 tend to follow structured output formats more reliably; switch models if yours struggles.

- Validate Results Regularly: Compare expected vs. actual outputs to ensure alignment and optimize performance.

- Focus on Actionable Insights: Use the outputs to make informed decisions, such as prioritizing leads or improving prompts.

Conclusion

Building evaluation workflows for AI agents is an essential step in ensuring their reliability and performance. By creating a structured process, engineering and product teams can systematically test, identify, and resolve discrepancies in their AI models. This not only improves the quality of outputs but also saves time and resources by eliminating guesswork.

With the right tools, practices, and iterative improvements, you can transform your AI workflows into robust, production-ready systems that drive efficiency and innovation in your organization. Focus on data preparation, workflow separation, and continuous refinement to unlock the full potential of your AI agents.

Source: "Stop Skipping Evaluation: Build Better AI Workflows" - Tyler AI, YouTube, Feb 8, 2026 - https://www.youtube.com/watch?v=btbg8lRMAiY